Resume Parsing Developer document

Resume Parsing — Production System Reference

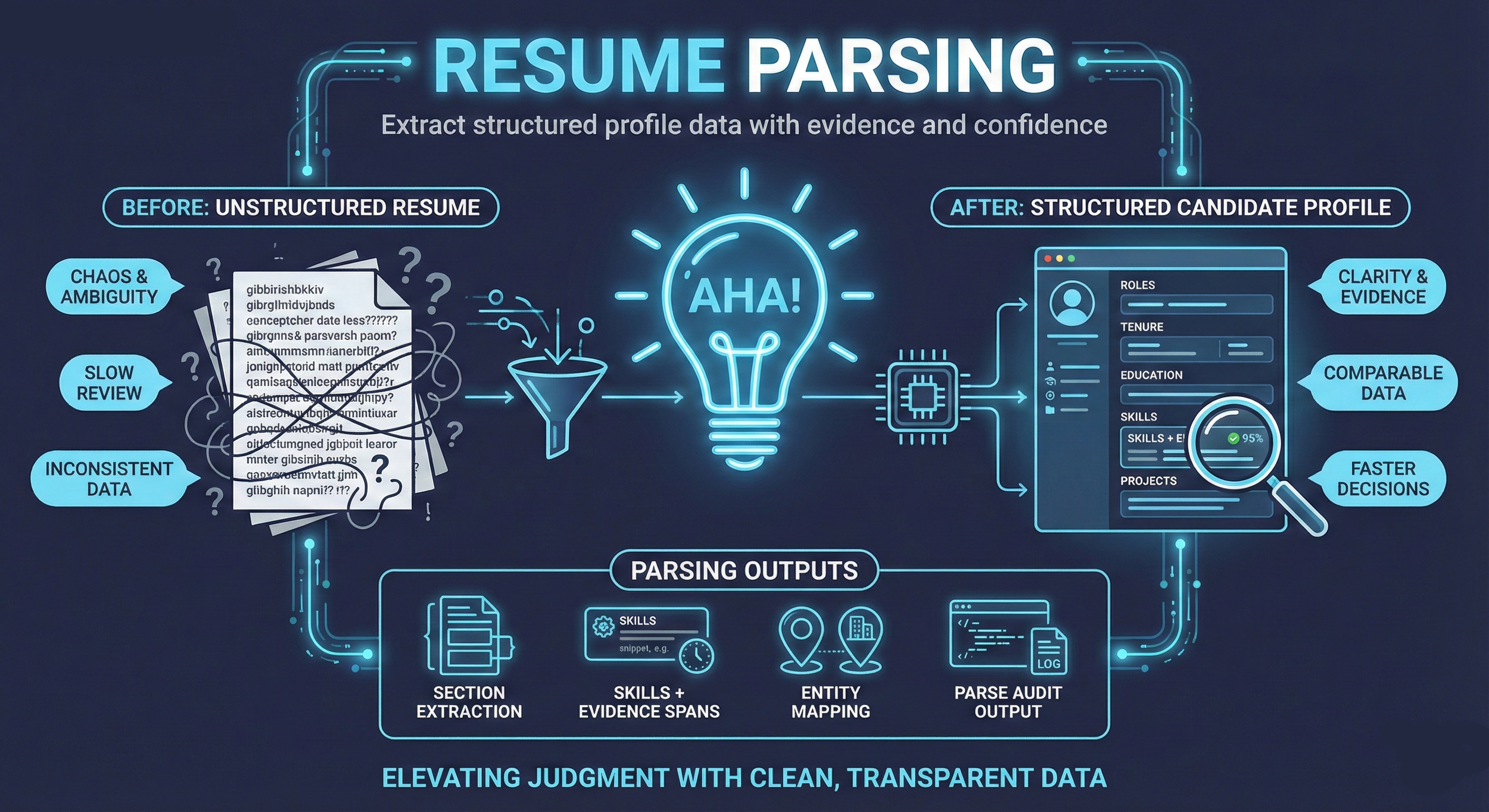

This document describes the Intelletto.ai production pipeline for converting unstructured candidate artifacts into high-fidelity, scoring-ready data. The pipeline is orchestrated by Netflix Conductor with workers deployed on Google Cloud Run. The focus is on auditability, cost-efficiency, and evidence-backed extraction.

Document Overview

- Module: Resume Parsing Pipeline

- Version: 1.0.0

- Last Updated: 2026-04-04

- Pipeline Version: v3

- Audience: Backend, Data, and AI Engineering

Core Objectives

The pipeline transforms PDFs, DOCX files, and raw text into an immutable Internal Intermediate Representation (IIR) characterized by:

- Explainability: Every extracted fact links to a specific source segment or page.

- Determinism: Predictable behavior through idempotent processing and explicit retry logic.

- Cost Management: Stage-level attribution for token usage and model invocations.

- Auditability: Versioned records with a full provenance trail.

Technical Stack

| Component | Specification |
|---|---|
| Primary API | FastAPI (Python 3.11+) on Google Cloud Run |
| AI Runtime | Google Gemini 2.5 Flash via google-genai Python SDK with response_schema enforcement |
| Extraction Method | Direct PDF upload to Gemini (not OCR-to-text prompting). Schema constrains output at the API decoding level. |
| OCR / Layout | Google Document AI Enterprise Document OCR (fallback for layout evidence) |
| Database | PostgreSQL 16+ on Google Cloud SQL (asia-southeast1) via psycopg3 async connection pool |
| Storage | Google Cloud Storage (intelletto-ai-resume-parse-404886655151) |
| Pipeline Orchestration | Netflix Conductor OSS 3.15.0 on GCE (asia-southeast1-b). Workflows define stage ordering, decision branches, retries, and rate limits. HUMAN task support for recruiter review gates. |
| Worker | Cloud Run service (intelletto-worker), HTTP-based task polling via httpx. Each stage is an async handler registered in worker_registry.py. |
| Rate Limiting | Conductor task-level: 30 RPM / 4 concurrent for Gemini stages. Python-side token bucket as secondary safety net. |
| Deployment | Cloud Run (asia-southeast1). API: 2 vCPU / 2Gi. Worker: always-on, min 1 instance. |
| Observability | Cloud Logging + structured pipeline_phase_event telemetry + Conductor workflow/task history |
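The Python-side token bucket listed under Rate Limiting is not shown in this document. To illustrate the technique only, here is a minimal sketch of a single-process bucket with an injectable clock. The class name, the burst capacity, and the clock parameter are hypothetical, not taken from intelletto-worker. At 30 RPM the refill rate is 30 / 60 = 0.5 tokens per second.

```python
import time
from typing import Callable


class TokenBucket:
    """Token bucket: holds up to `capacity` tokens, refilled continuously at `rate_per_sec`."""

    def __init__(self, capacity: float, rate_per_sec: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.capacity = capacity
        self.rate_per_sec = rate_per_sec
        self._clock = clock
        self._tokens = capacity          # start full, allowing an initial burst
        self._last = clock()

    def try_acquire(self, tokens: float = 1.0) -> bool:
        """Take `tokens` if available; never blocks. Returns False when the caller must wait."""
        now = self._clock()
        # Refill for the time elapsed since the last call, capped at capacity.
        self._tokens = min(self.capacity, self._tokens + (now - self._last) * self.rate_per_sec)
        self._last = now
        if self._tokens >= tokens:
            self._tokens -= tokens
            return True
        return False


# Hypothetical configuration: 30 RPM with a burst of 4, mirroring the Conductor concurrency limit.
gemini_bucket = TokenBucket(capacity=4, rate_per_sec=30 / 60)
```

A caller that gets False would wait and retry rather than call Gemini. Because Conductor already enforces 30 RPM / 4 concurrent, a bucket like this acts only as a backstop.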

v3 Architecture

The v3 codebase is domain-driven, replacing the v2 monolith (intelletto_server.py, retained as reference only).

| Module | Path | Responsibility |
|---|---|---|
| Entry Point | v3/main.py | Uvicorn entry, app bootstrap |
| App Factory | v3/src/api/app.py | FastAPI app creation, middleware, route mounting |
| Pipeline Domain | v3/src/domains/pipeline/ | Conductor adapter (conductor/): client, poller, worker_registry, worker_adapter. Stage runners. |
| Pipeline Stages | v3/src/domains/pipeline/stages/ | 12 stage modules: registration, dedup, ocr_layout, data_cleaning, extraction, normalization, enrichment, validation, scoring_inputs, gcs_archive, scorecard, artifacts |
| Scoring Domain | v3/src/domains/scoring/ | ScoreService, ConfigService, interview brief, authenticity, bias audit, comp inference, JD calibration, sector classification, cross-JD fit |
| Intake Domain | v3/src/domains/intake/ | Resume registration, GCS import, document management |
| JD Domain | v3/src/domains/jd/ | Job description management, AI JD generation |
| Candidates Domain | v3/src/domains/candidates/ | Candidate profiles, cover letters |
| Gemini Integration | v3/src/integrations/google/gemini.py | Gemini 2.5 Flash client with response_schema |
| GCS Integration | v3/src/integrations/google/gcs.py | Cloud Storage operations, RESUME-POOL structure |
| Document AI | v3/src/integrations/document_ai/ocr.py | OCR and layout extraction |
| Batch Gemini | v3/src/integrations/google/batch_gemini.py | Async batch processing (50% cost reduction, 200K requests/job) |
| Database | v3/src/database/pool.py, repository.py | psycopg3 async connection pool, generic repository |
| Job Queue | v3/src/database/job_queue.py | Async durable job queue for background processing |

Pipeline Stage Map (12 Stages)

The pipeline processes resumes through 12 stages orchestrated by Netflix Conductor. Three pipeline modes (A, B, C) are implemented as Conductor workflow definitions that control which stages execute. Each stage is a Conductor task polled and executed by the intelletto-worker Cloud Run service. Conductor handles retry logic, rate limiting, timeouts, and decision branching (e.g., dedup stop, gate stop). A HUMAN task type enables recruiter review gates for borderline candidates.

| # | Stage Key | Human Label | Code Location |
|---|---|---|---|
| 01 | RESUME_REGISTERED | Registered | stages/registration.py |
| 02 | DEDUP_CHECK | Duplicate Check | stages/dedup.py |
| 03 | OCR_LAYOUT | Reading Document Layout | stages/ocr_layout.py |
| 04 | DATA_CLEANING | Cleaning Extracted Text | stages/data_cleaning.py |
| 05 | STRUCTURED_EXTRACTION | Extracting Structured Resume | stages/extraction.py |
| 06 | NORMALIZATION | Skill Normalization | stages/normalization.py |
| 07 | DATA_FUSION_ENRICHMENT | Data Enrichment | stages/enrichment.py |
| 08 | VALIDATION_GATES | Quality Gates | stages/validation.py |
| 09 | SCORING_INPUT_BUILD | Score Preparation | stages/scoring_inputs.py |
| 10 | GCS_ARCHIVE_MOVE | Move Source to Processed | stages/gcs_archive.py |
| 11 | SCORECARD_GENERATION | Score Against JD | stages/scorecard.py |
| 12 | RECRUITER_ARTIFACTS | Final Output | stages/artifacts.py |

Pipeline Modes

| Mode | Name | Stages Executed | Use Case |
|---|---|---|---|
| A | Pool Build | 01-06, 08-09 | Parse and normalize resumes into the RESUME-POOL without scoring. Terminal state: POOL_READY. |
| B | Pool Activation | 07, 10-12 | Activate pooled resumes against a JD: enrich, archive, score, build artifacts. |
| C | Direct JD | 01-12 | Full end-to-end: resume arrives with a JD assignment, all 12 stages run sequentially. |

RESUME-POOL GCS Structure

Resumes are organized in GCS under RESUME-POOL/ with JD code directories for assignment:

    gs://intelletto-ai-resume-parse-404886655151/
      RESUME-POOL/
        {JD_CODE}/              # e.g., 59A356/
          CV - Name - Title.pdf
          CV - Name - Title.pdf
        unassigned/
          resume.pdf

The JD code is detected from the GCS directory path during import. The resume_document_job_map table links documents to job requisitions for scoring.
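A minimal sketch of how the JD code could be read from an object path during import, assuming the layout above (RESUME-POOL/{JD_CODE}/file). The helper name is hypothetical, and so is treating unassigned/ and processed/ as "no JD". This is not the production import code.

```python
from typing import Optional

POOL_PREFIX = "RESUME-POOL/"
# Directories under RESUME-POOL/ that hold no JD assignment (assumption).
NON_JD_DIRS = {"unassigned", "processed"}


def detect_jd_code(object_path: str) -> Optional[str]:
    """Return {JD_CODE} for RESUME-POOL/{JD_CODE}/<file>, else None (hypothetical helper)."""
    if object_path.startswith("gs://"):
        # gs://<bucket>/<object name> -> <object name>
        _, _, object_path = object_path[len("gs://"):].partition("/")
    if not object_path.startswith(POOL_PREFIX):
        return None
    parts = object_path[len(POOL_PREFIX):].split("/")
    # Need at least "<directory>/<file name>".
    if len(parts) < 2 or not parts[0] or not parts[-1]:
        return None
    if parts[0].lower() in NON_JD_DIRS:
        return None
    return parts[0]
```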

Contract Principles (Non-Negotiable)

These principles are enforced as hard contracts across parsing, normalization, and scoring. Each principle is detailed below with its implementation clause(s) and the acceptance gates that enforce it downstream.

- IIR schema is definitive and discoverable

- Evidence and page_map protocols are formalized

- Deterministic LLM invocation is enforceable

- Metering dictionary is pinned down

- Normalization/taxonomy ownership is unambiguous

- Scoring handoff contract is explicit

IIR schema section

Contract: The Internal Intermediate Representation (IIR) schema is the single source of truth for extraction output. It is versioned, discoverable, and must validate (schema-first; no best-effort).

Implemented in: Stage 05 (STRUCTURED_EXTRACTION) uses direct PDF upload to Gemini 2.5 Flash with the response_schema parameter. The schema constrains output at the API decoding level, guaranteeing that skills always return as {technical: string[], soft: string[]}.

Enforced by: Validation gates (Gate B schema validation as a hard pass/fail gate). Gate B now uses _partition_validation_issues() to separate blocking errors from advisory warnings. Blocking errors stop the pipeline.
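The document names _partition_validation_issues() but not its signature or its issue model. Here is a minimal sketch of the idea, assuming each issue carries an explicit blocking flag. The ValidationIssue type, its fields, and the issue codes are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class ValidationIssue:
    path: str       # JSON path of the offending field, e.g. "work_history[0].start_date"
    code: str       # machine-readable issue code (hypothetical vocabulary)
    blocking: bool  # True stops the pipeline; False is an advisory warning


def partition_validation_issues(
    issues: Iterable[ValidationIssue],
) -> tuple[list[ValidationIssue], list[ValidationIssue]]:
    """Split issues into (blocking_errors, advisory_warnings), preserving order."""
    blocking: list[ValidationIssue] = []
    advisory: list[ValidationIssue] = []
    for issue in issues:
        (blocking if issue.blocking else advisory).append(issue)
    return blocking, advisory


def gate_b_passed(issues: Iterable[ValidationIssue]) -> bool:
    """Gate B passes only with zero blocking errors; warnings are recorded but do not fail it."""
    blocking, _ = partition_validation_issues(issues)
    return not blocking
```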

Evidence/page_map section

Contract: Every extracted fact must be traceable to immutable source evidence, and every pipeline run must persist a lossless page_map for round-trip regeneration.

Implemented in: Stage 03 (OCR_LAYOUT) via Document AI + Stage 05 evidence span protocol.

Enforced by: Validation gates (Gate A lossless coverage and Gate C evidence integrity). Gate C now uses _validate_extraction_evidence_contract() with a 20% per-section fact density floor.
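One reading of Gate C's 20% floor is that, in every section, at least 20% of the extracted facts must carry an evidence span. Here is a minimal sketch under that reading, assuming each fact is a dict with an optional evidence list. The data shape and function names are hypothetical and are not the real _validate_extraction_evidence_contract().

```python
from typing import Mapping, Sequence

EVIDENCE_DENSITY_FLOOR = 0.20  # per-section floor stated for Gate C


def section_evidence_density(facts: Sequence[Mapping]) -> float:
    """Fraction of facts in one section that carry at least one evidence span."""
    if not facts:
        return 1.0  # an empty section has nothing to evidence (assumption)
    with_evidence = sum(1 for fact in facts if fact.get("evidence"))
    return with_evidence / len(facts)


def failing_sections(extraction: Mapping[str, Sequence[Mapping]],
                     floor: float = EVIDENCE_DENSITY_FLOOR) -> dict[str, float]:
    """Return {section: density} for sections below the floor; an empty dict means Gate C passes."""
    densities = {name: section_evidence_density(facts) for name, facts in extraction.items()}
    return {name: d for name, d in densities.items() if d < floor}
```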

Deterministic invocation policy

Contract: LLM invocation is deterministic and enforceable: model/version pinning, explicit tool parameters, bounded retries, and idempotent step execution (no hidden variability).

Implemented in: Gemini 2.5 Flash with temperature=0.1, response_schema enforcement, and extraction cached by checksum_sha256 scoped per tenant_id for cost efficiency. Same inputs produce same cached outputs.

Enforced by: Idempotency records (intelletto.idempotency_record) and replay checks within the pipeline orchestrator.
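Here is a minimal sketch of the tenant-scoped cache lookup, with an in-memory dict standing in for the real store. Including model_id and schema_version in the key is an assumption of this sketch, since the document keys only on checksum_sha256 + tenant_id. The extra fields prevent a cached result from outliving a model or schema change.

```python
import hashlib
from typing import Callable


class ExtractionCache:
    """In-memory stand-in for the tenant-scoped extraction cache (the production store is not shown)."""

    def __init__(self) -> None:
        self._entries: dict[tuple[str, str, str, str], dict] = {}
        self.misses = 0

    def get_or_extract(self, tenant_id: str, pdf_bytes: bytes, model_id: str,
                       schema_version: str, extract: Callable[[bytes], dict]) -> dict:
        checksum = hashlib.sha256(pdf_bytes).hexdigest()
        # tenant_id is part of the key, so identical files never share results across tenants.
        key = (tenant_id, checksum, model_id, schema_version)
        if key not in self._entries:
            self.misses += 1
            self._entries[key] = extract(pdf_bytes)  # only a miss pays for a Gemini call
        return self._entries[key]
```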

Metering dictionary table

Contract: Metering is deterministic and auditable per step. The metering dictionary is pinned, versioned, and used to compute cost-per-resume without drift.

Implemented in: intelletto.pipeline_phase_event records per-stage telemetry including stage, outcome, error_code, error_message, started_at, completed_at, and duration. intelletto.scoring_run_metering records per-scorecard token counts, latency breakdowns, and DB write counts.
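Here is a minimal sketch of a pipeline_phase_event record as a Python dataclass, using the columns listed above. Two details are assumptions for illustration: duration is computed from started_at and completed_at rather than stored, and "SUCCESS" is used as an outcome value.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class PipelinePhaseEvent:
    pipeline_run_id: str
    stage: str
    started_at: datetime
    completed_at: Optional[datetime] = None
    outcome: Optional[str] = None
    error_code: Optional[str] = None
    error_message: Optional[str] = None

    def complete(self, outcome: str, error_code: Optional[str] = None,
                 error_message: Optional[str] = None,
                 at: Optional[datetime] = None) -> None:
        """Close the event; `at` defaults to the current UTC time."""
        self.completed_at = at or datetime.now(timezone.utc)
        self.outcome = outcome
        self.error_code = error_code
        self.error_message = error_message

    @property
    def duration_ms(self) -> Optional[int]:
        """Derived from the two timestamps; None while the stage is still running."""
        if self.completed_at is None:
            return None
        return int((self.completed_at - self.started_at).total_seconds() * 1000)
```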

Normalization/taxonomy ownership statement

Contract: Ownership is unambiguous: the parsing pipeline produces taxonomy-resolved, scoring-ready identifiers (and the taxonomy_snapshot_id it used). The scoring engine must not "re-normalize" raw text; it consumes resolved IDs + confidence.

Implemented in: Stage 06 (NORMALIZATION) resolves skills against a taxonomy of 55,000+ hard skills, 63,000+ aliases, and 146 soft skills. Match types: EXACT, FUZZY, SEMANTIC. Certification-to-skill expansion via intelletto.certification_skill_map table.

Scoring handoff payload and acceptance gates

Contract: The parser hands off a single, explicit scoring payload that includes identifiers, evidence, and the snapshots used (IIR + taxonomy). Scoring begins only after all required gates pass.

Implemented in: Stage 09 (SCORING_INPUT_BUILD) creates an immutable intelletto.scoring_input_snapshot row. Stage 11 (SCORECARD_GENERATION) resolves published scoring config, loads JD + parsed resume + scoring input, and runs the 8-bucket weighted scoring model.

Minimum payload (scoring_input_snapshot columns):

    {
      "scoring_input_id": "uuid",
      "pipeline_run_id": "uuid",
      "parsed_resume_id": "uuid",
      "taxonomy_snapshot_id": "uuid",
      "iir_schema_version": "semver",
      "evidence_pack_sha256": "sha256:...",
      "normalized_skills_json": {...},
      "work_history_json": {...},
      "education_summary_json": {...},
      "certifications_json": {...},
      "languages_json": {...},
      "all_gates_passed": true
    }

Section 0: Pipeline Stages (12-Stage Production Pipeline)

1) Intent

Define the exact end-to-end resume parsing pipeline stages (and their boundaries) so developers can understand each step, with deterministic cost metering, schema-first extraction, and evidence traceability as non-negotiable invariants.

2) Why it matters (risks mitigated / dependencies unlocked)

This section prevents the two most common failure modes in AI extraction projects:

- "Partial extraction" (teams ship a "good enough" model that silently drops content). This is unacceptable because Intelletto requires 100% coverage and resume regeneration.

- "Cost drift" (prompt changes, retries, or OCR changes quietly double cost-per-resume). Intelletto requires deterministic metering and enforceable gates.

It also unlocks downstream work: skills normalization, evidence-based scoring inputs, audit trails, and regression testing.

3) Definitions for AI-new developers (minimal, practical)

- Structured extraction: sending the PDF directly to Gemini 2.5 Flash with a response_schema parameter that forces the model to output JSON conforming to the declared schema at the API decoding level.

- Schema validation gate: a hard pass/fail check (no "best effort"). Gate B uses _partition_validation_issues() to separate blocking errors from advisory warnings.

- Evidence: a pointer back to the source document. For Intelletto, evidence is page_index + bbox and/or text_span, always linked to a block_id in the lossless layer.

- Hallucination: model output not supported by evidence in the source. Intelletto mitigates it via (1) response_schema enforcement, (2) required evidence for extracted facts, (3) the coverage ledger, and (4) temperature 0.1 for factual extraction.

4) Requirements

MUST

- Process resumes through the 12 stages in order (stage map above), respecting pipeline mode (A, B, or C).

- Preserve a lossless page/block capture for every page, even if content is not mapped to structured fields.

- Produce outputs that support round-trip regeneration (at minimum HTML with faithful ordering).

- Emit per-stage pipeline_phase_event records (stage, outcome, error_code, duration).

- Be idempotent and retry-safe: re-running a stage must not duplicate artifacts or costs.

SHOULD

- Use direct PDF upload to Gemini rather than OCR-to-text prompting for extraction.

- Cache extraction results by checksum_sha256 (tenant-scoped) for cost efficiency.

MAY

- Add enrichment sources (e.g., public profile links) only when explicitly configured and always as a distinct stage with its own metering.

5) Implementation steps (the 12 stages)

Stage 01 -- RESUME_REGISTERED

- Create a resume_document record with status RECEIVED.

- Compute checksum_sha256 from the PDF bytes.

- Record the GCS object_uri and file metadata (mime_type, byte_size, page_count).

- Create or link candidate_profile (from filename inference or prior identity).

- Create a parsing_pipeline_run record with status PENDING.
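The database-free part of Stage 01 can be sketched as follows, assuming the "CV - Name - Title.pdf" naming seen in RESUME-POOL. The regex and the inferred_name/inferred_title keys are hypothetical. page_count is omitted because computing it requires a PDF library.

```python
import hashlib
import re

# Assumed filename convention: "CV - <Name> - <Title>.pdf" (spaces around the separators,
# so hyphenated names such as "Mary-Jane" are not split).
_FILENAME_RE = re.compile(r"^CV\s+-\s+(?P<name>.+?)\s+-\s+(?P<title>.+?)\.pdf$", re.IGNORECASE)


def registration_fields(file_name: str, pdf_bytes: bytes, mime_type: str = "application/pdf") -> dict:
    """Compute the Stage 01 fields that do not need the database (hypothetical helper)."""
    match = _FILENAME_RE.match(file_name)
    return {
        "checksum_sha256": hashlib.sha256(pdf_bytes).hexdigest(),
        "byte_size": len(pdf_bytes),
        "mime_type": mime_type,
        # Filename inference is only a hint for linking candidate_profile, never an identity.
        "inferred_name": match.group("name") if match else None,
        "inferred_title": match.group("title") if match else None,
        "status": "RECEIVED",
    }
```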

Stage 02 -- DEDUP_CHECK

- Compute deterministic fingerprints: file hash (bytes) + normalized-text hash + page-map hash.

- Search for duplicates: exact-match (SHA256) and near-match (candidate signal matching: email, phone, LinkedIn URL).

- Tenant-scoped queries (dedup is never cross-tenant).

- If duplicate: pipeline terminates with status DEDUP_SKIPPED and Gate D records the result.

- If new: pipeline continues to Stage 03.
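Here is a minimal sketch of two Stage 02 ingredients: a normalized-text hash, and normalized near-match signals (email, phone, LinkedIn URL). The normalization rules are assumptions. Tenant scoping belongs in the query that compares signals, which is not shown.

```python
import hashlib
import re
from typing import Optional


def text_fingerprint(text: str) -> str:
    """Hash of text after collapsing whitespace and case, so re-exports with different spacing match."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def candidate_signals(email: Optional[str], phone: Optional[str],
                      linkedin_url: Optional[str]) -> set[str]:
    """Normalized near-match signals for one resume."""
    signals: set[str] = set()
    if email and email.strip():
        signals.add("email:" + email.strip().lower())
    if phone:
        digits = re.sub(r"\D", "", phone)
        if len(digits) >= 7:  # ignore fragments too short to identify anyone (assumed threshold)
            signals.add("phone:" + digits)
    if linkedin_url and linkedin_url.strip():
        slug = re.sub(r"^https?://(www\.)?", "", linkedin_url.strip().lower()).rstrip("/")
        signals.add("linkedin:" + slug)
    return signals


def is_near_duplicate(a: set[str], b: set[str]) -> bool:
    """Any shared signal (within the same tenant) flags a possible duplicate."""
    return bool(a & b)
```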

Stage 03 -- OCR_LAYOUT

- Call Google Document AI Enterprise Document OCR to produce page-level text + layout coordinates.

- Build a normalized page_map with stable reading order, bbox geometry, and per-block hashes.

- Persist a coverage_ledger recording page_count_total and page_count_processed.

- Gate A (GATE_A_LOSSLESS) validates: observed_pages == expected_pages, every page has blocks.

- Graceful degradation when Document AI is unavailable: extraction proceeds with direct PDF to Gemini (OCR evidence is advisory, not blocking).
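Gate A's two conditions translate directly into a check over the page map. Here is a minimal sketch, assuming the page map is a list of {"page_index": int, "blocks": [...]} entries with zero-based indices (a hypothetical shape). When Document AI is unavailable, the result would be recorded as advisory rather than blocking.

```python
from typing import Mapping, Sequence


def gate_a_lossless(expected_pages: int, page_map: Sequence[Mapping]) -> tuple[bool, list[str]]:
    """Return (passed, reasons): every page 0..N-1 appears exactly once and has at least one block."""
    reasons: list[str] = []
    indices = sorted(page["page_index"] for page in page_map)
    if indices != list(range(expected_pages)):
        reasons.append(f"page indices {indices} do not cover 0..{expected_pages - 1} exactly once")
    for page in page_map:
        if not page.get("blocks"):
            reasons.append(f"page {page['page_index']} has no blocks")
    return (not reasons, reasons)
```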

Stage 04 -- DATA_CLEANING

- Normalize whitespace, bullet symbols, and hyphenation (without changing meaning).

- Preserve original text in lossless layer; cleaning produces a "clean view," not replacements.

- Identify repeated headers/footers and tag them.

- Pass-through when OCR was skipped (direct PDF mode).
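Here is a minimal sketch of a Stage 04 "clean view" built with regular expressions. The bullet glyph set and the rule that rejoins words hyphenated across a line break are assumptions. That second rule also merges genuine compounds split at a line end, which is one reason the raw text stays in the lossless layer. Header/footer tagging is not shown.

```python
import re

# Assumed set of bullet glyphs to unify: • ● ▪ ◦ ‣ ⁃ ·
_BULLETS = "\u2022\u25cf\u25aa\u25e6\u2023\u2043\u00b7"


def clean_view(raw: str) -> str:
    """Return a cleaned copy of the text; the raw string is never modified."""
    text = raw.replace("\u00a0", " ")                    # non-breaking spaces -> spaces
    text = re.sub(r"(\w)-\n(\w)", r"\1\2", text)         # rejoin words hyphenated across a line break
    text = re.sub(rf"^[ \t]*[{_BULLETS}][ \t]*", "- ", text, flags=re.MULTILINE)  # unify bullets
    text = re.sub(r"[ \t]+", " ", text)                   # collapse runs of spaces/tabs
    text = re.sub(r" *\n *", "\n", text)                  # trim spaces around line breaks
    return text.strip()
```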

Stage 05 -- STRUCTURED_EXTRACTION

- Direct PDF upload to Gemini 2.5 Flash with the response_schema parameter.

- The schema constrains output at the API decoding level -- skills always return as {technical: string[], soft: string[]}.

- Temperature: 0.1 for factual extraction.

- Extraction cached by checksum_sha256 scoped per tenant_id for cost efficiency.

- Output: parsed_resume.extraction_json (JSONB) with person, work history, education, skills, certifications, languages, URLs.

- Gate B (GATE_B_SCHEMA) validates the extraction schema. Uses _partition_validation_issues() to separate blocking from advisory issues. Blocking errors stop the pipeline. Status: FIXED (was P0-1: always passed true).

- Gate C (GATE_C_EVIDENCE) validates evidence spans. Uses _validate_extraction_evidence_contract() with a per-section 20% density floor. Status: FIXED (was P0-2: only checked list presence).

Stage 06 -- NORMALIZATION

- Normalize raw skill terms against canonical taxonomy: 55,000+ hard skills, 63,000+ aliases, 146 soft skills.

- Match types: EXACT, FUZZY, SEMANTIC.

- Certification-to-skill expansion via the intelletto.certification_skill_map table.

- Track all normalization decisions with confidence + match_type in intelletto.normalization_result.

- Do not overwrite raw terms; store canonical mappings separately.

- Quality alerts are written to intelletto.normalization_quality_alert.
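Here is a minimal sketch of the EXACT and FUZZY tiers using the standard library's difflib. It uses in-memory collections in place of the skill_hard/skill_alias tables and treats an alias hit as EXACT. The confidence values and the fuzzy cutoff are illustrative. SEMANTIC matching, which would need embeddings, is left out.

```python
import difflib
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class SkillMatch:
    raw_term: str                      # kept verbatim; never overwritten
    canonical_skill_name: Optional[str]
    match_type: Optional[str]          # "EXACT" or "FUZZY" in this sketch
    confidence: float


def normalize_skill(raw_term: str, canonical: set[str], aliases: Mapping[str, str],
                    fuzzy_cutoff: float = 0.88) -> SkillMatch:
    """Resolve a raw term: canonical name, then alias (keys assumed lower-cased), then fuzzy."""
    key = raw_term.strip().lower()
    by_lower = {name.lower(): name for name in canonical}
    if key in by_lower:
        return SkillMatch(raw_term, by_lower[key], "EXACT", 1.0)
    if key in aliases:
        return SkillMatch(raw_term, aliases[key], "EXACT", 0.95)  # alias tier (assumed confidence)
    close = difflib.get_close_matches(key, list(by_lower), n=1, cutoff=fuzzy_cutoff)
    if close:
        score = difflib.SequenceMatcher(None, key, close[0]).ratio()
        return SkillMatch(raw_term, by_lower[close[0]], "FUZZY", round(score, 3))
    return SkillMatch(raw_term, None, None, 0.0)  # unresolved: would raise a quality alert
```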

Stage 07 -- DATA_FUSION_ENRICHMENT

- Fetch and parse URLs found in the resume: LinkedIn, GitHub, portfolio, personal websites.

- SSRF protection: DNS resolve + private-IP block, scheme/port allowlist, redirect validation (32 tests).

- Enrichment evidence (source, URL, HTTP status, character count) is logged to intelletto.enrichment_audit.

- When a candidate has websites, they MUST be fetched and parsed. Not parsing them is a failure.

- Enrichment results are stored in intelletto.enrichment_payload.

- If enrichment fails: skip and proceed. Do not block scoring inputs.
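Here is a minimal sketch of the pre-fetch SSRF checks named above (scheme/port allowlist, DNS resolution, private-IP block), using only the standard library. The allowlists are assumptions. Two caveats apply: every redirect target must pass the same check, and the HTTP client should connect to the address that was validated. Otherwise, DNS rebinding can bypass the check.

```python
import ipaddress
import socket
from urllib.parse import urlsplit

ALLOWED_SCHEMES = {"http", "https"}
ALLOWED_PORTS = {80, 443}  # assumed allowlist


def is_fetch_allowed(url: str) -> bool:
    """Return True only if the URL passes the scheme/port allowlist and resolves to public IPs."""
    parts = urlsplit(url)
    if parts.scheme not in ALLOWED_SCHEMES or not parts.hostname:
        return False
    try:
        port = parts.port or (443 if parts.scheme == "https" else 80)
    except ValueError:  # malformed or out-of-range port
        return False
    if port not in ALLOWED_PORTS:
        return False
    try:
        infos = socket.getaddrinfo(parts.hostname, port, proto=socket.IPPROTO_TCP)
    except socket.gaierror:
        return False
    for *_, sockaddr in infos:
        ip = ipaddress.ip_address(sockaddr[0].split("%")[0])  # drop an IPv6 zone id if present
        if not ip.is_global:  # rejects private, loopback, link-local (169.254.169.254), reserved
            return False
    return True
```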

Stage 08 -- VALIDATION_GATES

Five gates control pipeline progression. Each gate has a passed boolean written to intelletto.pipeline_gate_result.

| Gate | Code | What it validates | Status |
|---|---|---|---|
| Gate A | GATE_A_LOSSLESS | OCR coverage -- page map completeness vs source page count | Working correctly |
| Gate B | GATE_B_SCHEMA | Extraction schema validity against IIR schema. Now uses _partition_validation_issues() -- blocking errors stop pipeline. | FIXED (was P0-1) |
| Gate C | GATE_C_EVIDENCE | Evidence span resolution rate per structured field. Now uses _validate_extraction_evidence_contract() with 20% per-section density floor. | FIXED (was P0-2) |
| Gate D | GATE_D_DEDUP | Deduplication result -- stops run as DEDUP_SKIPPED if duplicate | Working correctly |
| Gate E | GATE_E_ARTIFACTS | Meta-gate: URL quality, bbox quality, normalization, work history | Working correctly |

Stage 09 -- SCORING_INPUT_BUILD

- Create an immutable intelletto.scoring_input_snapshot row.

- Load the parsed resume, pipeline run, page map, canonical extraction, normalization results, and gate status.

- Hard-block if any required gate is missing or failed.

- Snapshot includes: normalized_skills_json, work_history_json, education_summary_json, certifications_json, languages_json.

- In Mode A (Pool Build): the pipeline terminates here with status POOL_READY.
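The hard block can be sketched as a function that raises before any snapshot row is written. Two parts of this sketch are assumptions: that all five gates are required, and the GateBlocked exception name.

```python
from typing import Mapping

# Assumption: every gate evaluated in Stage 08 is required before scoring.
REQUIRED_GATES = ("GATE_A_LOSSLESS", "GATE_B_SCHEMA", "GATE_C_EVIDENCE",
                  "GATE_D_DEDUP", "GATE_E_ARTIFACTS")


class GateBlocked(Exception):
    """Raised instead of writing a scoring_input_snapshot row."""


def check_required_gates(gate_results: Mapping[str, bool],
                         required: tuple[str, ...] = REQUIRED_GATES) -> None:
    """Hard-block when any required gate is missing or failed; return None when all passed."""
    missing = [gate for gate in required if gate not in gate_results]
    failed = [gate for gate in required if gate in gate_results and not gate_results[gate]]
    if missing or failed:
        raise GateBlocked(f"missing={missing} failed={failed}")
```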

Stage 10 -- GCS_ARCHIVE_MOVE

- Move the source PDF from the intake prefix to RESUME-POOL/processed/ in GCS.

- Update resume_document.object_uri with the new location.
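Here is a sketch of the copy-then-delete move using the google-cloud-storage client (Bucket.copy_blob and Blob.delete). It assumes the destination RESUME-POOL/processed/{document_id}.pdf shown in the storage layout. The function names are hypothetical, and updating resume_document.object_uri is left to the caller.

```python
def processed_object_name(resume_document_id: str) -> str:
    """Destination object name for Stage 10 (layout: RESUME-POOL/processed/{document_id}.pdf)."""
    return f"RESUME-POOL/processed/{resume_document_id}.pdf"


def archive_move(bucket_name: str, source_name: str, resume_document_id: str) -> str:
    """Copy the source object to processed/, then delete the original; return the new gs:// URI."""
    from google.cloud import storage  # imported lazily so the naming helper has no GCS dependency

    bucket = storage.Client().bucket(bucket_name)
    source = bucket.blob(source_name)
    destination = processed_object_name(resume_document_id)
    bucket.copy_blob(source, bucket, destination)  # copy first, so the source is never lost mid-move
    source.delete()                                # delete only after the copy succeeded
    return f"gs://{bucket_name}/{destination}"
```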

Stage 11 -- SCORECARD_GENERATION

- Resolve the published scoring_config_version for the JD.

- Load JD skill requirements via a 3-level fallback: jd_skill_requirement table, then extracted_features_json, then job_description_version.model_json.

- Execute the 8-bucket weighted scoring model:
  - Hard Skills (skill matching with exact/alias/fuzzy tiers)
  - Domain/Process (title relevance + skill overlap + industry continuity)
  - Scope/Complexity (seniority patterns)
  - Tenure/Recency (years + recency + stability)
  - Soft/Behavioral (12 soft signals, leadership weighted 1.2x)
  - Languages/Communications (English + additional)
  - Compliance/Scheduling (completeness + confidence + gate health)
  - Education/Certifications (tenure decay model: e^(-0.18 * max(0, years - 3)))

- Gate evaluation: N_OF_M_SKILLS, MIN_VALUE, MAX_VALUE, BOOLEAN_TRUE, TIMEZONE_OVERLAP.

- Modifiers: data fusion confidence + evidence coverage of JD duties.

- Persist: scorecard_version, bucket_score, modifier_result, scoring_gate_result, scoring_run_metering.
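The education/certification decay factor and the N_OF_M_SKILLS gate are small enough to sketch directly. The document does not define the decay input, so this sketch assumes years is the time since the degree or certification was earned. For example, 8 years gives e^(-0.18 * 5) = e^(-0.9), about 0.41, while anything up to 3 years keeps full weight.

```python
import math
from typing import Iterable


def tenure_decay(years: float) -> float:
    """Decay factor e^(-0.18 * max(0, years - 3)): full weight for 3 years, then exponential decay."""
    return math.exp(-0.18 * max(0.0, years - 3))


def n_of_m_skills(candidate_skill_ids: Iterable[str], required_skill_ids: Iterable[str], n: int) -> bool:
    """N_OF_M_SKILLS gate sketch: pass when at least n of the m required skills are present."""
    matched = set(candidate_skill_ids) & set(required_skill_ids)
    return len(matched) >= n
```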

Stage 12 -- RECRUITER_ARTIFACTS

- Build Intelletto Resume snapshot (HTML/PDF/JSON) from extraction data.

- Persist to intelletto.intelletto_resume_snapshot.

- DB fallback for extraction data when orchestrator context is unavailable.

- In Mode A: terminal state is POOL_READY (not COMPLETED).

- Pipeline run status set to COMPLETED (Mode B/C) or POOL_READY (Mode A).

6) Artifacts produced/consumed (what the pipeline produces)

Core canonical artifacts (must exist for every resume)

- intelletto.resume_document -- file metadata, GCS URI, checksum

- intelletto.parsing_pipeline_run -- run lifecycle, status, stages

- intelletto.pipeline_phase_event -- per-stage telemetry

- intelletto.parsed_resume -- extraction_json (JSONB)

- intelletto.normalization_result -- per-skill canonical mappings

- intelletto.pipeline_gate_result -- gate pass/fail per run

- intelletto.scoring_input_snapshot -- scoring-ready normalized data

- intelletto.coverage_ledger -- page/block coverage proof

7) Validation gates (5-gate framework)

Defined in Stage 08 above. Gates A through E with their current implementation status.

8) Failure modes + recovery paths

- Schema invalid JSON (Gate B blocks)
  - Recovery: run the Repair Prompt once; if still invalid, re-extract with stricter constraints and reduced temperature.

- Coverage ledger fails (missing blocks/pages)
  - Recovery: re-run Stage 03 (OCR_LAYOUT) with the fallback extractor; do not proceed to Stage 05.

- Evidence density below threshold (Gate C blocks)
  - Recovery: re-extract with "evidence required" enforcement, or move content to unmapped_content[].

- Cost spike (unexpected retries / OCR invoked)
  - Recovery: fail the run with COST_GATE_EXCEEDED status unless the cost_gate_override flag is set.

- Dedup match (Gate D)
  - Recovery: pipeline terminates with DEDUP_SKIPPED. Not a failure -- expected behavior for duplicate resumes.
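The Gate B recovery path (one repair, then one re-extraction) is a bounded retry. Here is a minimal sketch with the model calls injected as callables. The function name, the returned attempt list, and a retry temperature of 0.0 are assumptions.

```python
from typing import Callable, Optional


def recover_schema_failure(
    raw_output: str,
    validate: Callable[[str], bool],
    repair: Callable[[str], str],
    re_extract: Callable[[float], str],
    retry_temperature: float = 0.0,
) -> tuple[Optional[str], list[str]]:
    """At most one repair, then at most one re-extraction.

    Returns (valid_output_or_None, attempts) so every attempt can be metered.
    """
    attempts: list[str] = []
    repaired = repair(raw_output)          # formatting-only fix; must not add facts
    attempts.append("repair")
    if validate(repaired):
        return repaired, attempts
    fresh = re_extract(retry_temperature)  # stricter constraints, lower temperature than 0.1
    attempts.append("re_extract")
    if validate(fresh):
        return fresh, attempts
    return None, attempts                  # still invalid: the run fails, no third attempt
```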

9) Acceptance tests (how to prove it works)

For any resume, the pipeline MUST prove:

- All 12 stages complete (or the subset for the pipeline mode) with telemetry in pipeline_phase_event.

- All pages present in page_map (N/N).

- Structured extraction includes major constructs present in the source with evidence links.

- Skills normalized with canonical IDs and confidence scores.

- Scoring input snapshot created with all gates passed.

- Scorecard generated (Mode B/C) with per-bucket scores, evidence, and rubric metadata.

Production verification: 218 documents processed end-to-end, 218/218 scored, 0 failures (April 2026).

Section 1: Architectural Principles

1) Intent

Define the non-negotiable architectural principles that govern every component of the Intelletto resume parsing pipeline -- so developers can implement and extend the system without breaking: (a) schema-first correctness, (b) 100% extraction + regeneration, (c) auditability, and (d) cost-per-resume control.

2) Why it matters (risks mitigated / dependencies unlocked)

These principles prevent the systemic failures that derail AI pipelines:

- Silent loss of content (partial extraction): breaks the "regenerate without source" requirement.

- Unexplainable outputs (no evidence chain): breaks trust and auditability, and blocks downstream scoring explainability.

- Non-deterministic cost (unbounded retries, uncontrolled OCR/enrichment): kills unit economics at scale.

- Data drift (schema and prompt changes degrade quality): breaks regression confidence.

3) Definitions for AI-new developers (if AI concepts appear)

- Schema-first: the response_schema parameter is the contract; Gemini's API decoding layer enforces it. Prompts and code must obey it.

- Deterministic pipeline: same input + same versions = same outputs, barring upstream extractor changes (tracked via checksums).

- Repair vs re-extract:
  - Repair fixes JSON validity/formatting with minimal deviation (no new facts).
  - Re-extract re-runs the model on source content (higher cost; tightly controlled).

- Provenance: where a datum came from (source page/block + extraction run id + model id + prompt version).

4) Requirements

MUST (architectural invariants)

MUST-AP-01: Schema governs everything

- The pipeline MUST use response_schema with Gemini 2.5 Flash to constrain extraction output at the API decoding level.

- A run MUST fail if Gate B detects blocking schema violations -- no partial "acceptance."

MUST-AP-02: Lossless first, structured second

- The pipeline MUST persist a lossless page/block layer for every page.

- Structured fields MUST be derived from lossless content; they MUST NOT replace it.

MUST-AP-03: 100% extraction coverage

- Every page exists in lossless layer.

- Every page has blocks with evidence geometry (bbox) and stable reading order.

- Anything not mapped to structured fields is captured in unmapped_content[] with evidence.

MUST-AP-04: Evidence required for every structured fact

- Gate C enforces a per-section 20% evidence density floor via _validate_extraction_evidence_contract().

MUST-AP-05: Version everything

- Every output MUST include: schema_version, model_id, pipeline_version, and a deterministic run_id.

- Hashes for inputs: source_sha256, page_map_sha256, cleaned_text_sha256.
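Here is a minimal sketch of the input hashes and a deterministic run_id. Hashing the page map over canonical JSON (sorted keys, fixed separators) prevents key order from changing the hash. Deriving run_id as a UUIDv5 over tenant, source hash, and versions is an assumption, as is the namespace string.

```python
import hashlib
import json
import uuid

# Hypothetical fixed namespace; it must never change, or every run_id changes with it.
RUN_ID_NAMESPACE = uuid.uuid5(uuid.NAMESPACE_URL, "urn:intelletto:pipeline-run")


def sha256_hex(data: bytes) -> str:
    """Used for source_sha256 (PDF bytes) and cleaned_text_sha256 (UTF-8 encoded clean text)."""
    return hashlib.sha256(data).hexdigest()


def page_map_sha256(page_map: object) -> str:
    """Hash the page map as canonical JSON so dict key order cannot change the result."""
    canonical = json.dumps(page_map, sort_keys=True, separators=(",", ":"), ensure_ascii=False)
    return sha256_hex(canonical.encode("utf-8"))


def deterministic_run_id(tenant_id: str, source_sha256: str, pipeline_version: str,
                         schema_version: str, model_id: str) -> uuid.UUID:
    """Same tenant + same input + same versions -> same run_id."""
    name = "|".join((tenant_id, source_sha256, pipeline_version, schema_version, model_id))
    return uuid.uuid5(RUN_ID_NAMESPACE, name)
```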

MUST-AP-06: Idempotent, retry-safe by design

- Each stage MUST check intelletto.idempotency_record before writing.

- Retries MUST NOT double-count metering units.

- Extraction is cached by checksum_sha256 + tenant_id.
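Here is a minimal sketch of the check-then-record pattern, with an in-memory set standing in for intelletto.idempotency_record. The key format (pipeline_run_id:stage) is an assumption. The key is recorded only after the stage succeeds, so a failed attempt can be retried. Against PostgreSQL, the key insert and the stage's writes would share one transaction so that concurrent replays cannot both run.

```python
from typing import Callable


class IdempotencyStore:
    """In-memory stand-in for intelletto.idempotency_record (key, created_at)."""

    def __init__(self) -> None:
        self._keys: set[str] = set()
        self.metered_units = 0

    def seen(self, key: str) -> bool:
        return key in self._keys

    def record(self, key: str, units: int) -> None:
        self._keys.add(key)
        self.metered_units += units


def run_stage(store: IdempotencyStore, pipeline_run_id: str, stage: str,
              execute: Callable[[], int]) -> bool:
    """Run a stage at most once per (run, stage); return False on a replay."""
    key = f"{pipeline_run_id}:{stage}"
    if store.seen(key):
        return False          # replay: artifacts and metering already exist
    units = execute()         # may raise; nothing is recorded, so a retry runs again
    store.record(key, units)  # metering is counted exactly once, together with the key
    return True
```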

MUST-AP-07: Cost gates are first-class

- Each stage MUST emit pipeline_phase_event records.

- The orchestrator MUST enforce cost_ceiling_usd per resume/run unless overridden.
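Here is a sketch of a pinned metering dictionary and the cost_ceiling_usd check. This document does not give real rates, so the dictionary takes them as input. The class and function names are hypothetical. COST_GATE_EXCEEDED and cost_gate_override come from the failure-mode list.

```python
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class MeteringDictionary:
    """Pinned, versioned price list: unit name -> USD per unit."""
    version: str
    usd_per_unit: Mapping[str, float]

    def cost(self, units: Mapping[str, float]) -> float:
        unknown = sorted(set(units) - set(self.usd_per_unit))
        if unknown:
            # Refuse to price units the pinned dictionary does not define, instead of guessing.
            raise KeyError(f"units not in metering dictionary {self.version}: {unknown}")
        return sum(self.usd_per_unit[name] * qty for name, qty in units.items())


def cost_gate_status(run_cost_usd: float, cost_ceiling_usd: float,
                     cost_gate_override: bool = False) -> Optional[str]:
    """Return "COST_GATE_EXCEEDED" when the ceiling is breached without an override, else None."""
    if run_cost_usd > cost_ceiling_usd and not cost_gate_override:
        return "COST_GATE_EXCEEDED"
    return None
```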

MUST-AP-08: Separation of concerns (domain-driven)

- Extraction (Stage 05) MUST NOT do canonicalization/normalization (Stage 06).

- Enrichment (Stage 07) MUST be isolated and optional.

- Scoring (Stage 11) consumes the scoring_input_snapshot produced by Stage 09.

MUST-AP-09: Tenant isolation

- All queries MUST be scoped by tenant_id. No cross-tenant data leakage.

- Dedup is tenant-scoped. Extraction cache is tenant-scoped.

SHOULD (strong guidance)

- Prefer direct PDF upload to Gemini over OCR-to-text prompting.

- Keep temperature low (0.1) and maximize schema constraints.

- Normalize with deterministic rules and database lookups (not LLM normalization).

MAY (optional but allowed)

- Add multiple extraction passes only if metering and gates remain strict and predictable.

5) Implementation steps

- Domain-driven architecture: each pipeline stage is a separate module in v3/src/domains/pipeline/stages/.

- Orchestrator pattern: v3/src/domains/pipeline/orchestrator.py drives stage execution. event_orchestrator.py provides an event-driven alternative with Pub/Sub support.

- Always persist the lossless page map before calling Gemini (Stage 03 before Stage 05).

- Run structured extraction with response_schema: direct PDF upload, no OCR-to-text prompting.

- Normalize after extraction: Stage 06 uses database lookups against the 55K+ hard skills taxonomy.

- Enforce gates in Stage 08: all 5 gates are evaluated and their results persisted to pipeline_gate_result.

Section 2: Technology Stack (Gemini 2.5 Flash, Python, FastAPI, Cloud Run, Cloud SQL)

1) Intent

Document the Google-first toolchain used by Intelletto's v3 production pipeline:

- Direct PDF extraction via Gemini 2.5 Flash with response_schema enforcement

- Lossless evidence capture via Document AI OCR + layout page maps

- Deterministic cost metering via pipeline_phase_event and scoring_run_metering

- PostgreSQL-first persistence via Cloud SQL (psycopg3 async)

- Cloud Run deployment for stateless, auto-scaling execution

2) Why it matters

- Prompt drift without regression coverage: fixed by response_schema enforcement + golden test corpus (31/36 within 5 pts of v2).

- Untraceable outputs: fixed by coupling Gemini extraction with Document AI layout page maps.

- Unbounded cost: fixed by extraction caching (checksum-based) and cost_ceiling_usd enforcement.

- Schema inconsistency: CRITICAL -- without response_schema, Gemini returns skills in inconsistent formats, leading to zero-skill failures.

3) Production Stack

| Component | Technology | Details |
|---|---|---|
| LLM | Gemini 2.5 Flash | google-genai Python SDK. Direct PDF upload with response_schema. Temperature 0.1. |
| OCR | Document AI Enterprise Document OCR | Layout extraction for evidence geometry. Processor ID in env var. |
| API Framework | FastAPI (Python 3.11+) | Async/await throughout. Uvicorn server. |
| Database | PostgreSQL 16 on Cloud SQL | Instance: intelletto-ai, region: asia-southeast1, schema: intelletto, ~169 tables. |
| DB Driver | psycopg3 (async) | Connection pool in v3/src/database/pool.py. prepare_threshold=None. |
| Object Storage | Google Cloud Storage | Bucket: intelletto-ai-resume-parse-404886655151. RESUME-POOL prefix structure. |
| Compute | Google Cloud Run | 2 vCPU / 2Gi, 2 workers, min 1 / max 10. Region: asia-southeast1. |
| Batch Processing | Gemini Batch API | v3/src/integrations/google/batch_gemini.py. 50% cost reduction, 200K requests/job, bypasses QPM. |
| Secrets | Google Secret Manager | INTELLETTO_DB_URL, GEMINI_API_KEY, API_KEY, GITHUB_TOKEN, GOOGLE_OAUTH_CLIENT_SECRET |

4) Gemini Extraction Architecture

Key design decision: Direct PDF upload to Gemini 2.5 Flash with response_schema parameter. This is NOT OCR-to-text-to-LLM prompting.

- The PDF binary is uploaded directly as a Gemini content part.

- The response_schema parameter forces Gemini to output JSON conforming to the declared schema at the API decoding level.

- This eliminates the zero-skill failure mode where skills were returned in inconsistent formats.

- Skills ALWAYS return as {technical: string[], soft: string[]} -- no variation.

- Extraction is cached by checksum_sha256 scoped per tenant_id.

Gemini call pattern (conceptual):

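    # v3/src/integrations/google/gemini.py (conceptual)
    import json

    from google import genai
    from google.genai import types


    def extract_resume(pdf_bytes: bytes, extraction_schema, system_instruction: str, api_key: str) -> dict:
        client = genai.Client(api_key=api_key)
        response = client.models.generate_content(
            model="gemini-2.5-flash",
            # The PDF itself is the content part; no OCR text is sent to the model.
            contents=[types.Part.from_bytes(data=pdf_bytes, mime_type="application/pdf")],
            config=types.GenerateContentConfig(
                system_instruction=system_instruction,
                temperature=0.1,
                response_mime_type="application/json",
                response_schema=extraction_schema,  # enforced at decode
            ),
        )
        return json.loads(response.text)

5) Database Connection Pattern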

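    # v3/src/database/pool.py (conceptual): psycopg3 async pool, prepare_threshold=None (never prepare)
    import os
    from psycopg_pool import AsyncConnectionPool

    async def _reset(conn) -> None:  # DEALLOCATE ALL in reset callback
        await conn.execute("DEALLOCATE ALL")
        await conn.commit()  # the pool requires the connection back in idle state

    pool = AsyncConnectionPool(os.environ["INTELLETTO_DB_URL"], kwargs={"prepare_threshold": None}, reset=_reset, open=False)
    # open=False: call `await pool.open()` at startup. All handlers use: async with pool.connection() as conn:

Section 3: Data Stores and Canonical Persistence Model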

1) Intent

Define the canonical persistence model for Intelletto's resume parsing pipeline, including:

- Which artifacts are stored where (GCS vs PostgreSQL)

- The lossless-first storage pattern required for 100% extraction + regeneration

- The response_schema extraction contract (direct PDF to Gemini)

- The Evidence Span Protocol and Page Map Protocol

- The Per-Step Metering via pipeline_phase_event

2) PostgreSQL Schema (intelletto.*)

All tables live in the intelletto schema on Cloud SQL. Key tables:

Pipeline Tables

| Table | Purpose | Key Columns |
|---|---|---|
| resume_document | Raw file metadata + status | resume_document_id, tenant_id, candidate_id, object_uri, checksum_sha256, status (RECEIVED/PROCESSED/POOL_READY/DUPLICATE_REVIEW/PROCESS_FAILED) |
| parsing_pipeline_run | Per-run orchestration state | pipeline_run_id, tenant_id, resume_document_id, status (PENDING/RUNNING/COMPLETED/FAILED/COST_GATE_EXCEEDED/DEDUP_SKIPPED/PARTIAL), current_stage, cost_ceiling_usd |
| pipeline_phase_event | Per-stage telemetry | pipeline_run_id, stage, outcome, error_code, error_message, started_at, completed_at |
| parsed_resume | Structured extraction output | parsed_resume_id, tenant_id, candidate_id, extraction_json (JSONB), evidence_spans_json, confidence_summary_json |
| candidate_profile | Extracted identity | candidate_id, tenant_id, full_name, email, phone, linkedin_url, current_title, seniority |
| normalization_result | Skill canonical mappings | raw_term, canonical_skill_id, canonical_skill_name, match_type (EXACT/FUZZY/SEMANTIC), confidence |
| pipeline_gate_result | Gate pass/fail outcomes | pipeline_run_id, gate_code (GATE_A through GATE_E), passed (boolean), failure_reason, failure_detail (JSONB) |
| coverage_ledger | Per-page OCR coverage | pipeline_run_id, page_count_total, page_count_processed, gate_a_lossless_passed |
| scoring_input_snapshot | Immutable scoring handoff | scoring_input_id, pipeline_run_id, parsed_resume_id, normalized_skills_json, work_history_json, all_gates_passed |
| enrichment_payload | Data fusion results | pipeline_run_id, enrichment data JSONB |
| enrichment_audit | Fetch attempt log | source, url, http_status, char_count, ssrf_blocked |
| idempotency_record | Replay and dedup protection | key, created_at |

Scoring Tables

| Table | Purpose | Key Columns |
|---|---|---|
| scorecard_version | Scored output versions | scorecard_version_id, scoring_config_version_id, candidate_id, score_total, base_fit, modifier_points, status (SCORED/DISQUALIFIED/PENDING/FAILED) |
| bucket_score | Per-bucket scoring breakdown | scorecard_version_id, bucket_id, score, weight, contribution, evidence_json, rubric_applied_json |
| modifier_result | Scoring modifier outputs | scorecard_version_id, modifier_id, points_awarded, basis_json |
| scoring_gate_result | Per-gate evaluation | scorecard_version_id, gate_code, status (PASS/FAIL/SKIPPED), severity (DISQUALIFY/WARN/INFO) |
| scoring_config_version | Scoring config lifecycle | scoring_config_version_id, config_payload (JSONB), status (DRAFT/PUBLISHED/DEPRECATED) |
| scoring_run_metering | Per-scorecard metering | scorecard_version_id, gemini_calls, tokens_in/out, latency breakdowns, db_writes |

Taxonomy Tables

| Table | Purpose | Scale / Key Columns |
|---|---|---|
| skill_hard | Canonical hard skills | 55,000+ skills with category, is_hot_tech, is_emerging |
| skill_soft | Canonical soft skills | 146 skills with source_framework |
| skill_alias | Alias-to-skill mappings | 63,000+ aliases with confidence |
| vendor_certification | Certification taxonomy | cert_name, cert_type (CERTIFICATION/SPECIALIZATION), vendor |
| certification_skill_map | Cert-to-skill expansion | cert_name to skill_name with skill_dimension (HARD/SOFT), confidence_boost |

3) Google Cloud Storage Layout

```
gs://intelletto-ai-resume-parse-404886655151/
  RESUME-POOL/
    {JD_CODE}/        # Resumes assigned to a JD
      CV - Name - Title.pdf
    unassigned/       # Resumes without JD assignment
      resume.pdf
    processed/        # Archived after pipeline completion
      {document_id}.pdf
```

Immutability rule: Objects are write-once. Archive moves use a GCS copy followed by a delete of the source object, and the new location is recorded in resume_document.object_uri.

Section 4: End-to-End Workflow

1) Intent

Define the end-to-end execution workflow for the v3 pipeline, from ingest through scored output. This section specifies the orchestration, stage dependencies, and mode-specific behavior.

2) Orchestration

Two orchestrator implementations exist:

- Synchronous orchestrator (v3/src/domains/pipeline/orchestrator.py): drives stages sequentially within a single request. Used for Mode C (Direct JD) and Mode A (Pool Build).

- Event-driven orchestrator (v3/src/domains/pipeline/event_orchestrator.py): supports Pub/Sub-driven stage execution with MODE_STAGES filtering and retry semantics. Currently PUBSUB_ENABLED=false; the event orchestrator has parity with the synchronous one but has not been live-tested with Pub/Sub.

3) Mode A -- Pool Build (Stages 01-06, 08-09)

- Stage 01 RESUME_REGISTERED: Create resume_document (RECEIVED), candidate_profile, pipeline_run (PENDING).

- Stage 02 DEDUP_CHECK: Tenant-scoped SHA256 + signal matching. If duplicate, terminate with DEDUP_SKIPPED.

- Stage 03 OCR_LAYOUT: Document AI OCR for layout evidence. Gate A validates page coverage.

- Stage 04 DATA_CLEANING: Normalize whitespace, tag headers/footers. Pass-through if OCR skipped.

- Stage 05 STRUCTURED_EXTRACTION: Direct PDF to Gemini 2.5 Flash with response_schema. Cache by checksum + tenant. Gates B + C validated.

- Stage 06 NORMALIZATION: 55K+ hard skills, 63K+ aliases, 146 soft skills. EXACT/FUZZY/SEMANTIC matching.

- Stage 08 VALIDATION_GATES: All 5 gates evaluated, results persisted.

- Stage 09 SCORING_INPUT_BUILD: Create immutable scoring_input_snapshot. Pipeline terminates with POOL_READY.
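Stages 02 and 05 both key on the document checksum within a tenant. A minimal sketch of that key, assuming a streamed hex SHA-256 and a "tenant_id:checksum" string format (both the format and the helper names are illustrative). It covers only the exact-byte half of dedup, not the signal matching, and uses an in-memory set where the pipeline would query PostgreSQL.

```python
import hashlib
from typing import BinaryIO


def checksum_sha256(stream: BinaryIO, chunk_size: int = 1 << 20) -> str:
    """Hex SHA-256 of a file, read in chunks so large PDFs are not loaded at once."""
    digest = hashlib.sha256()
    for chunk in iter(lambda: stream.read(chunk_size), b""):
        digest.update(chunk)
    return digest.hexdigest()


def tenant_scoped_key(tenant_id: str, checksum: str) -> str:
    """Key for dedup and extraction-cache lookups; never shared across tenants."""
    return f"{tenant_id}:{checksum}"


def is_exact_duplicate(seen_keys: set[str], tenant_id: str, checksum: str) -> bool:
    """Exact-byte duplicate check against keys already registered for this tenant."""
    return tenant_scoped_key(tenant_id, checksum) in seen_keys
```

Because the tenant is part of the key, the same file uploaded by two tenants is processed (and cached) independently.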

4) Mode B -- Pool Activation (Stages 07, 10-12)

- Stage 07 DATA_FUSION_ENRICHMENT: Fetch LinkedIn, GitHub, portfolio URLs. SSRF-protected.

- Stage 10 GCS_ARCHIVE_MOVE: Move PDF to processed/ prefix.

- Stage 11 SCORECARD_GENERATION: 8-bucket weighted scoring against assigned JD.

- Stage 12 RECRUITER_ARTIFACTS: Build Intelletto Resume (HTML/PDF/JSON). Status becomes COMPLETED.

5) Mode C -- Direct JD (Stages 01-12)

All 12 stages execute sequentially. Resume arrives with a JD assignment (via GCS directory path or explicit assignment). No intermediate POOL_READY state.
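The event-driven orchestrator filters stages through MODE_STAGES. The mapping below is an illustrative reconstruction from the mode descriptions, not the contents of event_orchestrator.py: the stage identifiers, the Mode enum, and stages_for are hypothetical, and Mode C is assumed to follow the order of the Stage Dependencies diagram (Mode A stages, then Mode B stages).

```python
from collections.abc import Iterable
from enum import Enum


class Mode(str, Enum):
    POOL_BUILD = "A"
    POOL_ACTIVATION = "B"
    DIRECT_JD = "C"


# Mode A ends at SCORING_INPUT_BUILD (POOL_READY); Stage 07 is deferred to Mode B.
MODE_A = [
    "01_RESUME_REGISTERED", "02_DEDUP_CHECK", "03_OCR_LAYOUT", "04_DATA_CLEANING",
    "05_STRUCTURED_EXTRACTION", "06_NORMALIZATION", "08_VALIDATION_GATES",
    "09_SCORING_INPUT_BUILD",
]
MODE_B = [
    "07_DATA_FUSION_ENRICHMENT", "10_GCS_ARCHIVE_MOVE",
    "11_SCORECARD_GENERATION", "12_RECRUITER_ARTIFACTS",
]

MODE_STAGES: dict[Mode, list[str]] = {
    Mode.POOL_BUILD: MODE_A,
    Mode.POOL_ACTIVATION: MODE_B,
    # Assumption: Direct JD runs every stage in data-flow order.
    Mode.DIRECT_JD: MODE_A + MODE_B,
}


def stages_for(mode: Mode, completed: Iterable[str] = ()) -> list[str]:
    """Ordered stages still to run for a mode, skipping ones already completed."""
    done = set(completed)
    return [stage for stage in MODE_STAGES[mode] if stage not in done]
```

Passing the completed set lets a retried run resume from the first unfinished stage instead of starting over.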

6) Stage Dependencies and Data Flow

```
01 RESUME_REGISTERED
   |-> resume_document, candidate_profile, pipeline_run
02 DEDUP_CHECK
   |-> dedup_cluster (if match -> DEDUP_SKIPPED, stop)
03 OCR_LAYOUT
   |-> coverage_ledger, page_map (Gate A)
04 DATA_CLEANING
   |-> cleaned page_map
05 STRUCTURED_EXTRACTION
   |-> parsed_resume.extraction_json (Gates B, C)
06 NORMALIZATION
   |-> normalization_result rows
08 VALIDATION_GATES
   |-> pipeline_gate_result rows (all 5 gates)
09 SCORING_INPUT_BUILD
   |-> scoring_input_snapshot (Mode A stops here: POOL_READY)
07 DATA_FUSION_ENRICHMENT
   |-> enrichment_payload, enrichment_audit
10 GCS_ARCHIVE_MOVE
   |-> updated object_uri
11 SCORECARD_GENERATION
   |-> scorecard_version, bucket_score, modifier_result, scoring_gate_result
12 RECRUITER_ARTIFACTS
   |-> intelletto_resume_snapshot (COMPLETED)
```

Section 5: API Contracts

1) Intent

Define the API contracts for the v3 resume parsing pipeline. The v3 system uses domain-driven route organization.

2) v3 API Routes

Pipeline Endpoints

| Method | Path | Purpose |
|---|---|---|
| POST | /api/v3/pipeline/process | Trigger pipeline processing for a document |
| POST | /api/v3/pipeline/rescore/{document_id} | Re-score a document (append-only, new scorecard_version) |
| POST | /api/v3/pipeline/rescore/bulk | Bulk re-score multiple documents |
| GET | /api/v3/pipeline/latency-report | Pipeline latency SLA dashboard data |
| GET | /api/v3/health | Health check (DB connectivity, service status) |

Intake Endpoints

| Method | Path | Purpose |
|---|---|---|
| POST | /api/v1/intake/documents/upload | Browser upload |
| POST | /api/v1/intake/documents/import_gcs_prefix | Bulk GCS import from RESUME-POOL |
| GET | /api/v1/intake/aggregator/documents | List received resumes |
| GET | /api/v1/intake/intelletto_resumes | List parsed resumes |
| POST | /api/v1/intake/aggregator/ai_jd_assignment | AI-powered JD assignment (detects JD code from filename/GCS path) |

Scoring Endpoints

| Method | Path | Purpose |
|---|---|---|
| POST | /api/v1/scoring/scorecards | Generate scorecard for a resume against a JD |
| GET | /api/v3/scoring/interview-brief/{id} | Evidence-derived interview probes |
| GET | /api/v3/scoring/comp-inference | Compensation inference (P25/P50/P75) |
| GET | /api/v3/scoring/jd-calibration/{id} | JD difficulty calibration |
| POST | /api/v3/scoring/decision | Recruiter feedback signal loop |

Candidate Endpoints

| Method | Path | Purpose |
|---|---|---|
| GET | /api/v3/candidates/{id}/authenticity | Resume authenticity scoring |
| GET | /api/v3/candidates/{id}/sector-profile | Industry/sector classification |
| GET | /api/v3/candidates/{id}/bias-audit | Bias audit (advisory only) |
| GET | /api/v3/candidates/{id}/cross-jd-fit | Multi-JD cross-fit analysis |
| POST/GET | /api/v3/candidates/{id}/cover-letter | Cover letter + sentiment analysis |

3) Internal Stage Execution

Pipeline stages are NOT exposed as individual HTTP endpoints in v3. Instead, the EventDrivenOrchestrator (or synchronous orchestrator) calls stage functions directly within the same process. Stage results flow through an orchestrator context dictionary.

The v2 internal endpoints (/internal/v1/...) are retained for backward compatibility but all new pipeline execution uses the v3 orchestrator pattern.
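A minimal sketch of in-process stage execution with a shared context dictionary, assuming each stage is a plain function that returns keys to merge and can request an early, non-failing stop. run_pipeline, the "stop"/"status" keys, and the two sample stages are hypothetical, not the orchestrator's real interface; exceptions raised by a stage simply propagate to the caller.

```python
from collections.abc import Callable
from typing import Any

Context = dict[str, Any]
StageFn = Callable[[Context], Context]


def run_pipeline(
    stages: list[tuple[str, StageFn]],
    context: Context,
    final_status: str = "COMPLETED",
) -> Context:
    """Call each stage in-process and merge its returned keys into the shared context."""
    for name, stage_fn in stages:
        context["current_stage"] = name
        result = stage_fn(context)
        if result.get("stop"):
            # A stage may end the run early without it being a failure
            # (for example DEDUP_CHECK returning DEDUP_SKIPPED).
            context["status"] = result.get("status", "STOPPED")
            return context
        context.update(result)
    # A Mode A run would pass final_status="POOL_READY".
    context["status"] = final_status
    return context


# Hypothetical stage functions used only to exercise the loop.
def register(ctx: Context) -> Context:
    return {"resume_document_id": "doc-1"}


def dedup(ctx: Context) -> Context:
    if ctx.get("duplicate_of"):
        return {"stop": True, "status": "DEDUP_SKIPPED"}
    return {}
```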

Section 6: Cost Model and Metering

1) Intent

Define the metering and cost model for the pipeline so that cost-per-resume is deterministic and auditable.

2) Metering Implementation

Metering is implemented via two tables:

- intelletto.pipeline_phase_event: per-stage telemetry for every pipeline run. Records stage, outcome, error_code, error_message, started_at, completed_at, and computed duration.

- intelletto.scoring_run_metering: per-scorecard metering. Records gemini_calls, gemini_tokens_in/out, gate_evaluations, bucket_computations, modifier_computations, db_writes, and latency breakdowns (load_inputs, gates, buckets, modifiers, persist, total).

3) Cost Control

- Extraction caching: Results are cached by checksum_sha256 + tenant_id. Duplicate documents skip Gemini entirely.

- Cost ceiling: parsing_pipeline_run.cost_ceiling_usd is enforced per run. Override via the cost_gate_override flag.

- Batch processing: v3/src/integrations/google/batch_gemini.py provides a 50% cost reduction for bulk workloads via the Gemini Batch API.

- Budget stop: If cost_ceiling_usd is exceeded, the run terminates with status COST_GATE_EXCEEDED. Partial artifacts are preserved.

Section 7: Quality Metrics

1) Intent

Define the required quality metrics that prove the pipeline meets the prime directive: strict schema compliance, 100% extraction coverage, evidence traceability, and operational robustness.

2) Core Metrics

- M1 -- schema_valid: Gate B passes (extraction schema valid, blocking errors absent).

- M2 -- coverage_complete: Gate A passes (all pages captured in lossless layer).

- M3 -- evidence_density: Gate C passes (20% per-section fact density floor).

- M4 -- normalization_coverage: Percentage of raw terms with canonical matches.

- M5 -- pipeline_success_rate: Ratio of COMPLETED runs to total runs.

- M6 -- scoring_completion_rate: Ratio of scored documents to parseable documents.
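M4 through M6 are plain ratios. A sketch, assuming unmatched raw terms appear in normalization_result with an empty canonical_skill_id and that an empty denominator reports 0.0; the function names are illustrative.

```python
def ratio(numerator: int, denominator: int) -> float:
    """Safe ratio: an empty denominator yields 0.0 rather than raising."""
    return numerator / denominator if denominator else 0.0


def normalization_coverage(rows: list[dict]) -> float:
    """M4: share of raw terms that received a canonical match."""
    return ratio(sum(1 for row in rows if row.get("canonical_skill_id")), len(rows))


def pipeline_success_rate(statuses: list[str]) -> float:
    """M5: COMPLETED runs over all runs."""
    return ratio(statuses.count("COMPLETED"), len(statuses))


def scoring_completion_rate(scored: int, parseable: int) -> float:
    """M6: scored documents over parseable documents."""
    return ratio(scored, parseable)
```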

3) Production Results

- Pipeline success rate: 218/218 documents completed (100%) in the April 2026 trial.

- Scoring completion rate: 218/218 scored (100%), 0 failures.

- Golden corpus: 31/36 resumes scored within 5 points of the v2 scoring baseline.

- Test suite: 747 tests passing, 0 failures.

4) Regression Testing

Golden test corpus at v3/goldens/ provides regression baseline. The v3 test suite covers:

- Contract gate tests (schema validation, evidence contract)

- Contact URL recall tests

- Intelletto resume lossless tests

- Process QA tests

- Pipeline validation tests

- Scoring runtime tests

- Batch Gemini tests

Section 8: Error Handling, Retries, and Safe Degradation

1) Intent

Specify the error-handling contract for the resume pipeline so that runs are idempotent, retry-safe, repair-capable, and degradable (never lose content).

2) Pipeline Run Status Machine

| Status | Meaning |
|---|---|
| PENDING | Run created, not yet started |
| RUNNING | Active stage execution |
| COMPLETED | All stages passed, scorecard generated |
| POOL_READY | Mode A: parsed and normalized, awaiting JD assignment |
| FAILED | Unrecoverable error |
| COST_GATE_EXCEEDED | Budget exceeded, partial artifacts preserved |
| DEDUP_SKIPPED | Duplicate detected, pipeline stopped (not a failure) |
| PARTIAL | Some stages completed, run interrupted |

3) Error Taxonomy

- Input errors (permanent): PDF unreadable, unsupported format. Recovery: fail fast.

- Auth errors (permanent until fixed): Gemini API key invalid, IAM denied. Recovery: fail fast.

- Rate limits (transient): 429 RESOURCE_EXHAUSTED. Recovery: exponential backoff.

- Service availability (transient): 503/504, timeouts. Recovery: retry.

- OCR failures: Document AI partial pages. Recovery: retry once; if still partial, extraction proceeds with direct PDF to Gemini (graceful degradation).

- LLM output failures: JSON invalid or schema mismatch. Recovery: repair once, re-extract once, then fail.

- Persistence failures: DB connection (transient: retry) or constraint violation (permanent: fail).

4) Idempotency Pattern

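```python
# Every pipeline write checks idempotency_record first.
# Assumes `db` exposes an asyncpg-style fetchrow() with $1 placeholders;
# the wrapper function name is illustrative.
async def check_idempotency(db, idempotency_key: str) -> dict | None:
    existing = await db.fetchrow(
        "SELECT id FROM intelletto.idempotency_record WHERE key = $1",
        idempotency_key,
    )
    if existing:
        # Replay: return the earlier result instead of writing again.
        return {"status": "already_processed", "id": str(existing["id"])}
    return None  # key not seen before; the caller proceeds with the write
```

5) Concurrency Guard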

Pipeline runs use FOR UPDATE SKIP LOCKED with a 30-minute stale threshold to prevent concurrent execution of the same document.

Section 9: Security, Privacy, and Compliance

1) Tenant Isolation

- All database queries are scoped by tenant_id, so no data is shared across tenants.

- Dedup queries are tenant-scoped.

- The extraction cache is scoped by tenant_id + checksum_sha256.

- All sensitive v1 routes require the tenant_id parameter; requests without it return 422.

2) SSRF Protection (Data Fusion)

- DNS resolve + private-IP block before fetching any candidate URL.

- Scheme allowlist (http/https only), port allowlist.

- Redirect validation (no redirects to private IPs).

- 32 tests covering SSRF protection scenarios.
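The checks listed can be combined into one pre-fetch validator, sketched below with the standard library (urllib.parse, socket, ipaddress). The port allowlist of 80 and 443 is an assumption, and check_url and BlockedURL are illustrative names. To cover redirects, the caller would disable automatic redirects and run check_url on every Location target; connecting to the returned IPs rather than re-resolving the hostname narrows DNS-rebinding windows.

```python
import ipaddress
import socket
from urllib.parse import urlsplit

ALLOWED_SCHEMES = {"http", "https"}
ALLOWED_PORTS = {80, 443}  # assumption: the real port allowlist is not documented here


class BlockedURL(ValueError):
    pass


def check_url(url: str) -> list[str]:
    """Validate a candidate URL and return the resolved IPs that are safe to contact."""
    parts = urlsplit(url)
    if parts.scheme not in ALLOWED_SCHEMES or not parts.hostname:
        raise BlockedURL(f"scheme or host not allowed: {url}")
    port = parts.port or (443 if parts.scheme == "https" else 80)
    if port not in ALLOWED_PORTS:
        raise BlockedURL(f"port not allowed: {port}")
    # Resolve before fetching, then reject any private, loopback, or link-local address.
    infos = socket.getaddrinfo(parts.hostname, port, type=socket.SOCK_STREAM)
    ips = {info[4][0] for info in infos}
    for ip in ips:
        addr = ipaddress.ip_address(ip)
        if not addr.is_global:
            raise BlockedURL(f"{parts.hostname} resolves to non-public address {addr}")
    return sorted(ips)
```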

3) Authentication

- The pipeline worker requires an OIDC Bearer token or an API key; the User-Agent header is not accepted as proof of identity.

- Google OAuth is configured for api.intelletto.ai.

- API key authentication is available for programmatic access.

4) Data Classification

- All resume documents are classified as PII_HIGH by default.

- The resume_document.pii_classification field tracks the classification.

- The retention_policy_id and purge_at fields support data lifecycle management.

Section 10: Scoring Engine (8-Bucket Weighted Model)

1) Intent

Document the scoring engine that evaluates candidates against job descriptions using an 8-bucket weighted model with gates and modifiers.

2) Scoring Flow

- Config resolution: Load the published scoring_config_version for the JD.

- Input loading: Load the scoring_input_snapshot plus JD skill requirements (3-level fallback).

- Gate evaluation: Run gates first. If a DISQUALIFY gate fails, scoring stops.

- Bucket scoring: 8 buckets scored independently via ScorerRegistry.

- Base fit: Weighted sum of bucket scores (enabled bucket weights sum to 1.0).

- Modifier computation: Data fusion confidence + evidence coverage adjust +/- points.

- Final score: Base fit + modifier points, clamped 0..100.

- Classification: STRONG / BORDERLINE / RISK with ADVANCE / REVIEW / HOLD recommendation.

3) 8 Scoring Buckets

| # | Bucket | Formula |
|---|---|---|
| 1 | Hard Skills | required_match_rate * 75% + nice_to_have_rate * 25% + quality_bonus (up to +8). Match tiers: exact=1.0, alias>=0.85, fuzzy>=0.65. |
| 2 | Domain/Process | title_relevance*50% + skill_domain_overlap*30% + industry_continuity*20% |
| 3 | Scope/Complexity | seniority_score*60% + scope_signals*40%. Chief/VP=100, Director=90, Senior=80, Manager=75, Junior=35, Intern=20. |
| 4 | Tenure/Recency | years_score*40% + recency_score*35% + stability_score*25% |
| 5 | Soft/Behavioral | 12 soft signals with leadership/problem_solving weighted 1.2x |
| 6 | Languages/Communications | english_score*60% + additional_languages*40% |
| 7 | Compliance/Scheduling | completeness*50% + norm_confidence*30% + gate_health*20% |
| 8 | Education/Certifications | Education tenure decay: e^(-0.18 * max(0, years - 3)). Seniority-aware: Junior (degree 60%, skills 40%) to Executive (degree 5%, skills 95%). Cert bonus capped at 0.12. Combined: education 55% + cert matching 35% + cert-expanded skill bonus 10%. |
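Two of the table's formulas, worked as code: the Hard Skills blend and the Education/Certifications decay and combination. Rates as 0..1 inputs on a 0..100 output scale, clamping Hard Skills at 100, and reading years as years since the qualification are assumptions; the function names are illustrative.

```python
import math


def hard_skills_score(required_match_rate: float, nice_to_have_rate: float, quality_bonus: float) -> float:
    """Bucket 1: 75% required + 25% nice-to-have on a 0..100 scale, plus a bonus capped at +8."""
    base = 100 * (0.75 * required_match_rate + 0.25 * nice_to_have_rate)
    # Assumption: the bonus is in points and the bucket is clamped to 100.
    return min(100.0, base + max(0.0, min(8.0, quality_bonus)))


def education_decay(years: float) -> float:
    """Bucket 8 decay e^(-0.18 * max(0, years - 3)): no decay for the first 3 years."""
    return math.exp(-0.18 * max(0.0, years - 3))


def education_cert_score(education: float, cert_match: float, cert_skill_bonus: float) -> float:
    """Bucket 8 combination: education 55% + cert matching 35% + cert-expanded skills 10%."""
    return 0.55 * education + 0.35 * cert_match + 0.10 * cert_skill_bonus
```

For example, at 8 years the decay factor is e^(-0.9), roughly 0.41, while anything at or under 3 years keeps the full weight.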

4) Scoring Config Lifecycle

- DRAFT: editable, not used for scoring.

- PUBLISHED: immutable, active for scoring. Enabled bucket weights must sum to 1.0.

- DEPRECATED: archived, not used for new scoring.
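The lifecycle rules can be enforced at transition time. A sketch, assuming transitions only move forward (DRAFT to PUBLISHED to DEPRECATED) and that buckets without an enabled flag count as enabled; ConfigStatus, TRANSITIONS, and the helper names are hypothetical.

```python
import math
from enum import Enum


class ConfigStatus(str, Enum):
    DRAFT = "DRAFT"
    PUBLISHED = "PUBLISHED"
    DEPRECATED = "DEPRECATED"


# Assumed forward-only lifecycle: a published config is never edited back to draft.
TRANSITIONS = {
    ConfigStatus.DRAFT: {ConfigStatus.PUBLISHED},
    ConfigStatus.PUBLISHED: {ConfigStatus.DEPRECATED},
    ConfigStatus.DEPRECATED: set(),
}


def validate_for_publish(config_payload: dict) -> None:
    """Raise if enabled bucket weights do not sum to 1.0 (within float tolerance)."""
    total = sum(b["weight"] for b in config_payload.get("buckets", []) if b.get("enabled", True))
    if not math.isclose(total, 1.0, abs_tol=1e-9):
        raise ValueError(f"enabled bucket weights sum to {total}, expected 1.0")


def transition(current: ConfigStatus, target: ConfigStatus, payload: dict) -> ConfigStatus:
    if target not in TRANSITIONS[current]:
        raise ValueError(f"cannot move {current.value} -> {target.value}")
    if target is ConfigStatus.PUBLISHED:
        validate_for_publish(payload)
    return target
```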

5) JD Skill Requirements Loading

Three-level fallback ensures skills are available for scoring:

1. intelletto.jd_skill_requirement table (structured, preferred)
2. job_description_version.extracted_features_json (legacy)
3. job_description_version.model_json (JD Orchestrator)
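The fallback order can be expressed as an ordered list of loaders, returning the first non-empty result together with the level it came from so the chosen source can be audited. The loader callables, load_jd_skills, and the return shape are assumptions for illustration.

```python
from collections.abc import Callable, Sequence

Loader = Callable[[str], list[dict]]


def load_jd_skills(jd_version_id: str, loaders: Sequence[tuple[str, Loader]]) -> tuple[str, list[dict]]:
    """Return (source_name, skills) from the first loader that yields any skills."""
    for source, load in loaders:
        skills = load(jd_version_id)
        if skills:
            return source, skills
    return "none", []  # no level produced skills
```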

Section 11: Acceptance Criteria

1) Pipeline Acceptance

- All 12 stages complete (or mode-appropriate subset) for every input document.

- All 5 validation gates evaluated and persisted.

- Pipeline phase events recorded for every executed stage.

- Scoring input snapshot created with all gates passed.

2) Extraction Acceptance

- Skills are always returned as {technical: string[], soft: string[]} (response_schema enforced).

- No zero-skill candidates (schema enforcement prevents this).

- Evidence density >= 20% per structured section (Gate C).

3) Scoring Acceptance

- 8 bucket scores computed with evidence and rubric metadata.

- Per-bucket contribution = score * weight.

- Base fit = sum of contributions.

- Modifiers applied within budget.

- Final score clamped 0..100.

4) Lossless Resume Output Constraints

The lossless spec defines what every generated Intelletto resume must contain. Non-negotiable:

- NEVER produce: "No detailed achievements mapped in this parse." -- this is always a defect.

- NEVER truncate experience sections for any reason.

- NEVER omit advisory roles, even those outside the main employment timeline.

- NEVER omit education or certifications -- if absent from source, write "Not captured in source document".

- The Intelletto Resume word count must always exceed the source resume word count.
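Only some of these constraints are mechanically checkable; truncation and omitted advisory roles need comparison against the extraction itself. A sketch of the checkable ones, assuming the generated resume is available as a dict with text, education, and certifications keys (a hypothetical shape) and counting whitespace-separated words.

```python
import re

FORBIDDEN_PHRASES = ("No detailed achievements mapped in this parse.",)
MISSING_PLACEHOLDER = "Not captured in source document"


def word_count(text: str) -> int:
    return len(re.findall(r"\S+", text))


def lossless_violations(source_text: str, generated: dict) -> list[str]:
    """Return human-readable defects; an empty list means these checks pass."""
    problems: list[str] = []
    body = generated["text"]
    for phrase in FORBIDDEN_PHRASES:
        if phrase in body:
            problems.append(f"forbidden phrase present: {phrase!r}")
    for section in ("education", "certifications"):
        if not generated.get(section):
            problems.append(f"{section} omitted (use {MISSING_PLACEHOLDER!r} when absent)")
    if word_count(body) <= word_count(source_text):
        problems.append("output word count does not exceed source word count")
    return problems
```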

5) Test Suites

| Suite | Location | Count / Scope |
|---|---|---|
| v3 tests | v3/tests/ | 101+ tests |
| v2 contract gates | tests/test_contract_gates_unittest.py | Gate B, Gate C, validation tests |
| Contact URL recall | tests/test_contact_url_recall_unittest.py | URL extraction accuracy |
| Lossless resume | tests/test_intelletto_resume_lossless_unittest.py | Output completeness |
| Process QA | tests/test_process_qa_unittest.py | End-to-end pipeline QA |
| Pipeline validation | tests/test_pipeline_validation_unittest.py | Stage validation |
| Scoring runtime | tests/test_scoring_runtime_unittest.py | Scorer registry, bucket dispatch |
| Batch Gemini | tests/test_batch_gemini_unittest.py | Batch API integration |
| Total | | 747 tests passing |

Section 12: Extraction Schema

1) response_schema Enforcement

The v3 pipeline uses Gemini 2.5 Flash with response_schema parameter. This constrains model output at the API decoding level, meaning the JSON structure is guaranteed by the API, not by post-hoc validation.

2) Key Schema Shape

```json
{
  "basics": {
    "name": "string",
    "email": "string",
    "phone": "string",
    "location": "string",
    "urls": ["string"]
  },
  "work_history": [
    {
      "title": "string",
      "company": "string",
      "start_date": "string",
      "end_date": "string",
      "achievements": ["string"]
    }
  ],
  "education": [
    {
      "school": "string",
      "degree": "string",
      "field": "string",
      "graduation_date": "string"
    }
  ],
  "skills": {
    "technical": ["string"],
    "soft": ["string"]
  },
  "certifications": [
    {
      "name": "string",
      "issuer": "string",
      "date": "string"
    }
  ],
  "languages": [
    {
      "language": "string",
      "proficiency": "string"
    }
  ]
}
```

Critical: The skills field is ALWAYS returned as {technical: string[], soft: string[]}. Without response_schema enforcement, Gemini would return skills in inconsistent formats (flat array, nested objects, comma-separated strings), leading to zero-skill failures in normalization and scoring.
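A sketch of the direct-PDF call with a response_schema, using the google-genai SDK's genai.Client, types.Part.from_bytes, and types.GenerateContentConfig. The schema is a trimmed subset of the shape above, written as a dict in Gemini's Schema dialect (uppercase type names); the prompt text and the extract_resume name are hypothetical, and the SDK import is deferred so the schema constants load without the SDK installed.

```python
import os

# Trimmed response_schema: skills must always be {technical: [...], soft: [...]}.
SKILLS_SCHEMA = {
    "type": "OBJECT",
    "properties": {
        "technical": {"type": "ARRAY", "items": {"type": "STRING"}},
        "soft": {"type": "ARRAY", "items": {"type": "STRING"}},
    },
    "required": ["technical", "soft"],
}

EXTRACTION_SCHEMA = {
    "type": "OBJECT",
    "properties": {
        "basics": {
            "type": "OBJECT",
            "properties": {
                "name": {"type": "STRING"},
                "email": {"type": "STRING"},
                "urls": {"type": "ARRAY", "items": {"type": "STRING"}},
            },
        },
        "work_history": {
            "type": "ARRAY",
            "items": {
                "type": "OBJECT",
                "properties": {
                    "title": {"type": "STRING"},
                    "company": {"type": "STRING"},
                    "start_date": {"type": "STRING"},
                    "end_date": {"type": "STRING"},
                    "achievements": {"type": "ARRAY", "items": {"type": "STRING"}},
                },
            },
        },
        "skills": SKILLS_SCHEMA,
    },
    "required": ["basics", "work_history", "skills"],
}


def extract_resume(pdf_bytes: bytes, model: str = "gemini-2.5-flash") -> str:
    """Send the PDF bytes directly to Gemini and return the schema-constrained JSON text."""
    from google import genai  # deferred import: only needed when calling the API
    from google.genai import types

    client = genai.Client(api_key=os.environ["GEMINI_API_KEY"])
    response = client.models.generate_content(
        model=model,
        contents=[
            types.Part.from_bytes(data=pdf_bytes, mime_type="application/pdf"),
            "Extract this resume into the provided schema.",
        ],
        config=types.GenerateContentConfig(
            response_mime_type="application/json",
            response_schema=EXTRACTION_SCHEMA,
        ),
    )
    return response.text
```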

3) parsed_resume.extraction_json

The full extraction output is stored in intelletto.parsed_resume.extraction_json as a JSONB column. This is the canonical extraction artifact for the pipeline run.

Section 13: Development Environment Setup

1) Prerequisites

- Python 3.11+

- Google Cloud SDK (gcloud)

- Access to the Intelletto Google Cloud project

2) Local Development

```shell
# Clone and set up
cd Intelletto
python -m venv .venv
source .venv/bin/activate
pip install -r v3/requirements.txt

# Start v3 server (port 8080)
cd v3
uvicorn main:app --port 8080 --reload

# Or use the startup script
./start_services.sh
```

3) Database Access

The database is remote Cloud SQL (not local). Never attempt local postgres commands.

```shell
# Cloud SQL instance
#   Instance: intelletto-ai
#   Region:   asia-southeast1
#   Schema:   intelletto

# Connection via Cloud SQL Proxy (for local dev)
cloud_sql_proxy -instances=<project>:asia-southeast1:intelletto-ai=tcp:5432

# Or set the INTELLETTO_DB_URL env var directly
```

4) GCS Bucket

```
GCS_BUCKET=intelletto-ai-resume-parse-404886655151

# Resume intake path
gs://intelletto-ai-resume-parse-404886655151/RESUME-POOL/

# Processed archive path
gs://intelletto-ai-resume-parse-404886655151/RESUME-POOL/processed/
```

5) Deployment

```shell
# Build and deploy to Cloud Run
gcloud builds submit --config v3/cloudbuild.yaml

gcloud run deploy intelletto-api \
  --region asia-southeast1 \
  --image <image> \
  --min-instances 1 \
  --max-instances 10 \
  --cpu 2 \
  --memory 2Gi
```

6) Running Tests

```shell
# v2 contract tests (always use .venv/bin/python)
./.venv/bin/python -m pytest tests/test_contract_gates_unittest.py \
  tests/test_contact_url_recall_unittest.py \
  tests/test_intelletto_resume_lossless_unittest.py \
  tests/test_process_qa_unittest.py -q

# v3 tests
./.venv/bin/python -m pytest v3/tests/ -q

# Expected: all tests pass (747 total)
```

7) Environment Variables

| Variable | Purpose |
|---|---|
| INTELLETTO_DB_URL | Cloud SQL connection string |
| GEMINI_API_KEY | Google Gemini API key |
| API_KEY | Intelletto API authentication key |
| GCS_BUCKET | GCS bucket name (intelletto-ai-resume-parse-404886655151) |
| GOOGLE_CLOUD_PROJECT | Google Cloud project ID |
| GOOGLE_DOCUMENT_AI_PROCESSOR_ID | Document AI processor ID |
| GOOGLE_DOCUMENT_AI_LOCATION | Document AI processor location |
| AUTH_ENABLED | Enable/disable authentication (true for production) |
| PUBSUB_ENABLED | Enable event-driven orchestrator (currently false) |

Section 14: Data Model -- Entities and Relationships

1) Core Entity Relationships

```
resume_document (1) -----> (N) parsing_pipeline_run
  |                              |
  +-> candidate_profile (1:1)    +-> pipeline_phase_event (1:N)
                                 +-> pipeline_gate_result (1:N)
                                 +-> coverage_ledger (1:1)
                                 +-> parsed_resume (1:1)
                                 |     +-> normalization_result (1:N)
                                 +-> scoring_input_snapshot (1:1)
                                       +-> scorecard_version (1:N)
                                             +-> bucket_score (1:N)
                                             +-> modifier_result (1:N)
                                             +-> scoring_gate_result (1:N)
                                             +-> scoring_run_metering (1:1)
```

2) Key Foreign Key Chains

- resume_document -> candidate_profile (via candidate_id)

- parsing_pipeline_run -> resume_document (via resume_document_id)

- parsed_resume -> parsing_pipeline_run (via parsing_job_id)

- scoring_input_snapshot -> parsed_resume (via parsed_resume_id)

- scorecard_version -> scoring_input_snapshot (via scoring_input_id)

- resume_document_job_map -> resume_document + job_requisition

3) Key JSONB Shapes

extraction_json (in parsed_resume)

```
{
  "basics": {"name": "...", "email": "...", "phone": "...", "urls": [...]},
  "work_history": [{"title": "...", "company": "...", "dates": {...}, "achievements": [...]}],
  "education": [{"school": "...", "degree": "...", "field": "..."}],
  "skills": {"technical": ["..."], "soft": ["..."]},
  "certifications": [{"name": "...", "issuer": "...", "date": "..."}],
  "languages": [{"language": "...", "proficiency": "..."}]
}
```

normalized_skills_json (in scoring_input_snapshot)

```
[
  {"raw_term": "ReactJS", "canonical_id": "uuid", "canonical_name": "React", "match_type": "EXACT", "confidence": 0.95},
  {"raw_term": "K8s", "canonical_id": "uuid", "canonical_name": "Kubernetes", "match_type": "FUZZY", "confidence": 0.88}
]
```

config_payload (in scoring_config_version)

```
{
  "gates": [{"gateId": "...", "name": "...", "severity": "DISQUALIFY|WARN|INFO", "rule": {...}}],
  "buckets": [{"bucketId": "...", "name": "...", "weight": 0.25, "enabled": true}],
  "modifiers": [{"modifierId": "...", "name": "...", "minPoints": -5, "maxPoints": 5}],
  "modifiersBudgetPoints": 10
}
```

Appendix A: Known Gaps and Remaining Work

A.1 Resolved P0 Gaps

| ID | Gap | Resolution |
|---|---|---|
| P0-1 | Gate B always returned passed = true | FIXED. _partition_validation_issues() separates blocking from advisory issues. Gate B fails on blocking errors. Intake stops the pipeline on failure. 6 new tests. |
| P0-2 | Gate C only checked list presence | FIXED. _validate_extraction_evidence_contract() checks per-section fact coverage with a 20% density floor. 9 new tests. |

A.2 Partial Resolution

| ID | Gap | Status |
|---|---|---|
| P0-3 | Bucket dispatch + rubric persistence | PARTIAL. Dispatch now via ScorerRegistry (exact match, not substring). rubric_applied_json has real scorer_id/input_hash/evidence_hash. Remaining: scorers are inline heuristics, not configurable rubric rules. 16 tests. |

A.3 External Dependencies Not Yet Integrated

- GitHub API integration: Requires GitHub API token for structured repository analysis.

- Proxycurl for LinkedIn: Requires Proxycurl API key for LinkedIn profile parsing.

- PII Redaction Pre-LLM: Blocked -- direct PDF to Gemini makes pre-LLM redaction architecturally difficult.

A.4 Enhancement Features Delivered

All delivered in v3, live on Cloud Run:

- Re-scoring API (single + bulk, append-only)

- Interview Brief Generator (evidence-derived, no Gemini)

- Resume Authenticity Scoring

- Industry/Sector Classification (15 NAICS-style sectors)

- Bias Audit (advisory only)

- Compensation Inference (P25/P50/P75)

- JD Difficulty Calibration

- Recruiter Feedback Signal Loop

- Multi-JD Cross-JD Auto-Fit

- Pipeline Latency SLA Dashboard

- Cover Letter + Sentiment Analysis

- AI JD Generator (Gemini-powered)